Three Common Digital Accessibility Barriers to Fix Today

Sidra Mahmood

July 27, 2021


Users are part and parcel of user-centred product design. They are the customer and the lifeblood of a business. For any product to be successful, it must centre user needs early in the product ideation stage. User research ideally starts early, is iterated on regularly, and builds the foundation for the product.

In the case of the government, our user profile is a little different. Within the Government of Canada, our customer is often everybody: people of all ages and life stages. They use our products for essential services like applying for employment insurance. They rely on us during key life events like immigrating to Canada or registering the birth of a child. These services have multiple moving parts, including legislation, policy, strategic direction, financial considerations, political climate, operational requirements, human resources, electoral priorities, content design, communications, bilingual translation, and more.

Given the complexity of government services, there are checks and balances to ensure that important things aren’t left out. Policy and legislation ensure that government employees stick to rigid guidelines and approval processes. These include, but are not limited to:

All these (necessary) formalities mean that it can take a lot longer to deploy a solution than it would in the private sector. User research in civic tech is tricky to perform because of the scale of users, the number of stakeholders and organizational structures implicated, and mistrust of the government. Unfortunately, these processes are slow to adapt, and regulatory requirements don’t always keep up with current techniques and processes. The pace at which things change across government can create an environment that sometimes feels hostile to user-centred, agile product design. This pace, however, is also an opportunity to innovate in non-traditional ways to access key user insights: the user research that should inform the delivery of the digital products our population uses.

To be clear, the techniques I will share in this article are meant to accompany standard user research practices, not replace them. They’re great when you as a designer are in a bind due to specific bureaucratic constraints or urgent delivery deadlines. They work when you need some elementary insights to drive your product idea, but they are not a substitute for actual research.

So how does one, as a designer within government, create user-centred products without direct access to users?

Before starting from zero, it can be helpful to ask around your organization to see if user research activities are currently under way or have been completed in the past. Ask for access to reports and the raw data that informed those reports, although this may not always be possible. Collect artifacts that can inform your work to an extent, like service metrics or user surveys. The existing work may not be especially relevant to your current product, but there is always something to learn.

If you’re not friends with your data analytics team, now’s a good time to get friendly. Ask if they have analytics running, and whether you can get access to the data. Digital analytics can tell you a lot if you know where to look. For example, a particularly high bounce rate on a page might suggest that the page is not relevant to the search query that brought the user there.
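As a rough sketch of the bounce-rate example, assuming your analytics team can export per-page session counts: bounce rate is commonly computed as single-page sessions divided by total sessions that started on the page. The `PageStats` structure, the page paths, the numbers, and the 0.7 threshold below are all hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    path: str                  # hypothetical landing page path
    sessions: int              # sessions that started on this page
    single_page_sessions: int  # sessions that left without viewing a second page


def bounce_rate(stats: PageStats) -> float:
    """Share of sessions that left after viewing only this page."""
    if stats.sessions == 0:
        return 0.0
    return stats.single_page_sessions / stats.sessions


def flag_high_bounce(pages, threshold=0.7):
    """Return the paths whose bounce rate meets or exceeds the threshold."""
    return [p.path for p in pages if bounce_rate(p) >= threshold]


# Illustrative numbers only, not real analytics data.
pages = [
    PageStats("/ei/apply", sessions=1200, single_page_sessions=420),
    PageStats("/ei/eligibility", sessions=800, single_page_sessions=640),
]

print(flag_high_bounce(pages))  # ['/ei/eligibility']
```

A flagged page is a prompt for a closer look, not a conclusion: a high bounce rate can also mean the user found what they needed right away.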

Look at case studies from products other governments or civic tech organizations have created. Many civic tech groups meet remotely to showcase the solutions they build, and you might find a group working on a similar product or solving a problem your users have. Conferences like Inclusive Product Week, hosted by Code for San Jose, often publish keynote videos on their website after the event for anyone to learn from.

Within civic tech, there is typically a welcoming community that looks at failure as an opportunity to learn. Civic tech enthusiasts tend to be open about the realities within which they are designing services and products. Participating in meetup groups and community events is a great way to stay in the loop about the ways in which we can excel in designing digital government services.

Look outside the government as well. There is extensive academic literature and policy material widely available that looks at general user behaviours and patterns with digital services.

Good user research doesn’t have to be formal. Social media is one of the best places to learn more about how our users experience a product. When you work for the government, Reddit, Facebook, TikTok and Twitter are goldmines of insight. A search for “service canada” offers a truly eye-opening and sometimes scathing review of the government services we provide. We can’t be afraid of honesty, and we shouldn’t take it personally. We can better learn about people’s fears when it comes to applying for government services by meeting them where they’re at.

As with all research, observational research on social media comes with ethical implications. You may find some resistance within your organization, given that social media research is a relatively novel approach to qualitative analysis and may fall outside the scope of your existing organizational research guidelines.

The American Psychological Association has provided five principles for consideration when performing social media research — applicable to this type of research are principles of informed consent, confidentiality, and privacy. Social media analysis can potentially violate some of these considerations, and some risks can be mitigated by:

As with all forms of research, there are limitations to these methods. Social media networks vary greatly in their demographics (some skew towards higher-income people, while others are more popular with youth), and all of them require a certain level of technical literacy to use. According to a Pew Research Center study, 10% of Twitter users create 80% of all tweets. Your social media research should accordingly account for the biases and lack of equitable representation inherent in passive research practices.

When performing social media research, I typically take screenshots of the post, ensuring that I crop out any identifying information, and save them to a research folder.

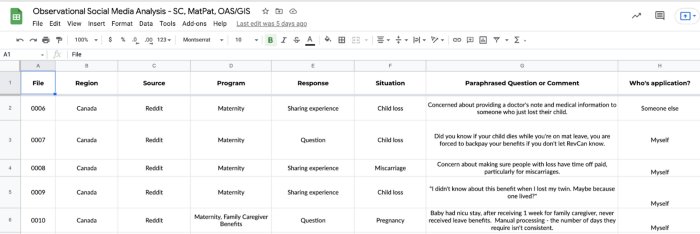

I then paraphrase the folder of images into a spreadsheet with tags for further analysis. I tag whether the query seems to be on behalf of the author or on behalf of someone else. I also add a region if available (provincial or national), the source of the post, what EI program the query pertains to, and what keywords might be applicable to their situation (e.g. pregnancy, retirement, or low income).

Finally, I aggregate my research into some basic metrics to better understand how to prioritize product requirements. I use an informal word cloud of the 50 most-referenced keywords to get a sense of which subjects are of more concern than others.
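The tagging and keyword counting described above can be sketched in a few lines of Python. This is a minimal illustration, not the actual spreadsheet workflow: the `TaggedPost` fields mirror the tags mentioned in the text, but the field names, the `top_keywords` helper, and the sample rows are all made up for the example.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class TaggedPost:
    summary: str               # paraphrase of the post, with no identifying details
    on_behalf_of_other: bool   # True if the author is asking for someone else
    region: str                # province or "national"; empty if unknown
    source: str                # where the post was found
    program: str               # EI program the query pertains to
    keywords: list[str] = field(default_factory=list)


def top_keywords(posts, n=50):
    """Count how many posts mention each keyword and return the n most common."""
    counts = Counter()
    for post in posts:
        # Normalize case and whitespace, and count each keyword once per post.
        counts.update({kw.strip().lower() for kw in post.keywords})
    return counts.most_common(n)


# Invented rows for illustration only.
posts = [
    TaggedPost("Asking how maternity leave affects a claim", False,
               "Ontario", "Reddit", "maternity", ["pregnancy", "low income"]),
    TaggedPost("Helping a parent apply after retirement", True,
               "national", "Twitter", "regular", ["retirement"]),
    TaggedPost("Unsure about eligibility while pregnant", False,
               "", "Facebook", "maternity", ["Pregnancy"]),
]

print(top_keywords(posts, n=1))  # [('pregnancy', 2)]
```

The resulting counts can feed a word cloud tool directly, or simply be read as a ranked list.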

Within this particular sample set, for example, we discovered that 1 in 5 of the posts came from someone looking for information on behalf of someone else (e.g. an elderly parent). This gives us insight into how we should be designing service access to serve not just individuals directly, but also the people they might be supporting in their life journeys.
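A proportion like this is straightforward to compute from the tagged data, and breaking it down by source helps show whether one network is driving the result. The sketch below assumes each row is a hypothetical `(source, on_behalf_of_other)` pair; the rows are invented so that the overall share works out to 1 in 5.

```python
from collections import defaultdict


def share_on_behalf(rows):
    """Return (overall share, share per source) of posts made on someone else's behalf.

    rows: iterable of (source, on_behalf_of_other) pairs.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for source, on_behalf in rows:
        totals[source] += 1
        hits[source] += int(on_behalf)
    overall = sum(hits.values()) / sum(totals.values()) if totals else 0.0
    by_source = {s: hits[s] / totals[s] for s in totals}
    return overall, by_source


# Invented rows for illustration only.
rows = [
    ("Reddit", True), ("Reddit", False), ("Reddit", False), ("Reddit", False),
    ("Twitter", False), ("Twitter", False), ("Twitter", False), ("Twitter", False),
    ("Facebook", True), ("Facebook", False),
]

overall, by_source = share_on_behalf(rows)
print(f"{overall:.0%}")  # 20%
```

With a sample this small, the per-source shares are indicative at best; they are a cue for where to look next rather than a finding in themselves.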

The case for performing passive research within government rests on authority bias. Research shows that people generally have a “[deep-seated] duty to authority” (Milgram, 1963). They are less likely to complain about the government to the government. There is a fear that it may impact their own benefits somehow. There are also guidelines around compensation and recruitment which can inhibit participation. Passive research allows us to better understand the true sentiment that we might not always capture with a phone call or in-person interview.

The opposite can also be true in some cases: a lack of trust, particularly within communities experiencing historical (and in many cases, ongoing) marginalization within Canada, can have an impact on formal user research participation rates and diversity.

Governments can sometimes treat user research, testing and usability as the same thing when they are different, and serve dramatically different purposes.

User research should take centre stage prior to the production of the product. It’s an opportunity to better understand the basic bounds within which you should be designing. Some good questions to answer here are:

Don’t jump into user testing early or, perish the thought, use it to replace user research. Create a product that’s worthy of user testing first. A practice worth considering is for our user research and testing methods to take a page out of pharmacology. When vaccines are being developed globally, they have to meet minimum quality and safety standards before they can be given to human research participants, let alone distributed widely.

As designers, our preliminary research should inform the design of an optimal product based on our informed predictions so that we can have something worthy of testing. User testing can be time and resource-intensive, so optimizing the time we have with our users lies in ensuring we’re asking the right questions — the questions we haven’t been able to answer yet.

Ensure interactions match what the user might expect — time with the external user is valuable and should be based on actionable tasks that can be measured in a quantifiable way. Here’s an example:

I encourage you as a designer to speak up when you sense that user research might be conflated with user testing within your organization.

In summary, user-driven design within government can be a complex beast to navigate because of bureaucratic and regulatory guidelines. The stakes are high given that the services we design impact the vast majority of the population. Many government agencies are currently in the early stages of digital transformation, which means that it’s an optimal time to start introducing user-driven and agile design methods to product delivery. As designers, it is in our best interest to get creative and resourceful when we run up against barriers. We can explore non-traditional channels for user research to subsequently create digital government services people use because they want to, and not because they have to.

Want Code for Canada's support conducting user research in government? Fill out a form on our inclusive user research page.
